Bernd Dorn was presenting what LovelySystems is doing with Google AppEngine and how you work around certain limitations.

Limitations of GAE

The App Engine now has several limits:

- Code files 1000 x 10MB (max. 150MB)

- Static Files 1000 x 10MB

- Request size 10MB, duration 30s

- API-Call size limit 1 MB (cannot fetch data objects bigger than 1 MB)

- 30 reqs in parallel (including cron-jobs, everything you do, not so much a problem for serving though)

- Limited query and indexing facilities (huge problem, because of BigTable)

All of these limits are fixed (correct?), you cannot pay for getting more.

Working around the static file limits

- They use S3 Cloudfront for serving static file

- has less latency in Europe

- e.g. with Pyjamas or GWT the limit of 1000 files is quickly reached. You end up with a lot of ressources which need to be deployed (e.g. TinyMCE alone is 500 files).

Working around code size limits

First they had problems with Django and the size of the files, not the number. If you zip the files then you might end up with less files but they are too big. This problem has been resolved in the meanwhile though.

They also created a buildout recipe called lovely.recipe:eggbox which you can use like this:

recipe = lovely.recipe:eggbox

scripts =

eggs = Django

zope.contenttype

lovely.gae

...

location = ${buildout:directory}/app/packages

zip = True

excludes = ...

Working around the request limits

The major problem are images which you might have on content management systems. The problem here is maybe not the 10 MB limit but the 30s duration.

The solution they use is to store that content on S3. Image processing is done on a specialized proxy which is running on Amazon EC2.

File Upload

This works as follows now:

- Browser displays upload form by getting an upload policy from the GAE app

- Browser posts the file directly to Amazon S3 along with a redirect url which was defined in the policy

- S3 redirects the post after the finished upload to a special url on the GAE app

- The GAE app now fetches metadata, calculates the mime-type and creates a new file object in the datastore. This mime-type calculation is done via a Range: header on the image data to properly guess the mime-type.

- The meta information is now sent back to the client where it is further processed

Data Migration

- AppEngine has no means to do data migration (like renaming all usernames to lowercase).

- LovelySystems solves this by a special view that executes python code. No application is needed.

- Client script walks through all entities until view returns False.

- State is stored in memcache

- Work on entity level – so no update on automatic values. e.g. datetimes.

Basically the same view is called over and over again, always processing up to 1000 items which are then migrated.

Question: How do you test all this? Answer later

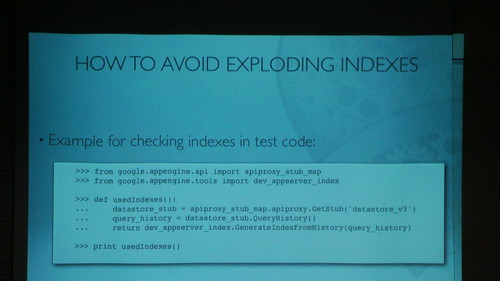

How to avoid exploding indexes?

This is read from the generated index.yaml file:

That way you can check if everything is tested.

One solution is to use one single ListProperty for querying and sorting by using prefixes. GAE does not need to build custom indexes if queries just use one attribute for searching.

<br />class Document(db.Model):<br /> terms = db.StringListProperty()<br /> title = db.StringProperty()<br /> body = db.TextProperty()<br /><br />def index_doc(d):<br /> d.terms = map(lambda t: u'T:%s' %t, d.body.split())<br /> d.terms.append(u'Z:%s' %d.title) # for descrending sort<br /> ... missing is ascending sort<br /> d.put()<br /><br /><a href="http://www.flickr.com/photos/mrtopf/3675852614/" title="Google App Engine and it's limitations by MrTopf, on Flickr"><img src="http://farm3.static.flickr.com/2536/3675852614_5af376f8a3.jpg" alt="Google App Engine and it's limitations" width="500" height="281" /></a><br /><br />

If we order or filter by a second attribute we need one index per AND filter!

And full text searches are done on single attribute queries by LovelySystems.

Development setup and how they test things

- Always use zc.buildout for sandbox build (collecting eggs, creating launchers)

- Use zope.testing as test framework with special GAE db layer

- Use Google App Engine Helper for Django (they don’t use much of Django though, e.g. not the models, just auth and templating)

Test layer for zope.testing creates an in-memory datastore for each test. In-memory is important to make testing fast.

How they usually deploy:

- 3 apps deployed on GAE

- Dev: for ad-hoc testing from svn via appcfg update

- Beta: deployment script checks out a tag and creates a new project directory and uploads to beta app

- Production: same as beta but with stable release only

Question: Did you look into using solr on e.g. an EC2 instance for full text indexing?

Answer: Yes, but you always have to do HTTP requests which slows things down. But it has been done.

Question: Why not move everything over to EC2?

Answer: First of all it’s cheaper and deployment/maintenance is all easier.

Technorati-Tags: europython, europython2009, python, gae, google, limits, lovelysystems